Augmented reality HUD: The next step-up for smart vehicles

Driving in the dark, in inclement weather or on rough terrain can be quite a challenge. But what if one could see through a blind bend, guide a vehicle safely through incessant rain or thick fog, and effortlessly tackle winding country roads? The ability to do just that is very much within reach. The fusion of augmented reality (AR) with the latest innovations in head-up display (HUD) technology is set to revolutionize the motoring experience by setting new standards in safety and comfort.

As cutting-edge automobiles come off the assembly lines, packed with sensors, cameras, and intelligent driving aids, the HUD, once limited to military and civil aviation, is making a foray into personal transportation. The technology, however, is still in its infancy, and current automotive HUDs are relics of the pre-digital era of driving, before cars were connected to their surroundings, other road users and the internet.

Fusing AR with HUD

Based on bulky conventional optics and restricted data processing, today’s HUDs can only project tiny images on the windscreen, directly above the steering wheel, providing rudimentary information such as direction and speed. They fail to take advantage of data generated by the sensors to create genuine human-machine interfaces (HMIs).

They also suffer from a major drawback. The images projected by a standard HUD compel drivers to focus their eyes at a very short focal distance of only a few meters, potentially distracting them and making it almost impossible to look at the HUD and pay attention to the road ahead at the same time.
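The refocusing burden described above can be put into rough numbers. The sketch below uses the standard optics relation that focusing demand in diopters is the reciprocal of the viewing distance in meters. The function names and the example distances (a virtual image at 2.5 m, a vehicle 50 m ahead) are illustrative assumptions, not figures from Nokia.

```python
import math


def accommodation_demand(distance_m: float) -> float:
    """Return the focusing demand in diopters for a target at distance_m meters."""
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    # 1 / infinity evaluates to 0.0, i.e. a fully relaxed eye.
    return 1.0 / distance_m


def refocus_step(hud_image_m: float, road_target_m: float) -> float:
    """Return the change in focusing demand when the eye switches targets."""
    return abs(accommodation_demand(hud_image_m) - accommodation_demand(road_target_m))


if __name__ == "__main__":
    # Assumed distances, chosen only for illustration.
    near_hud = refocus_step(2.5, 50.0)
    far_hud = refocus_step(math.inf, 50.0)
    print(f"virtual image at 2.5 m:    {near_hud:.2f} D")
    print(f"virtual image at infinity: {far_hud:.2f} D")
```

Under these assumptions, moving the virtual image from a few meters out to infinity shrinks the refocusing step from 0.38 D to 0.02 D, which is the effect a far or multi-distance virtual image aims for.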

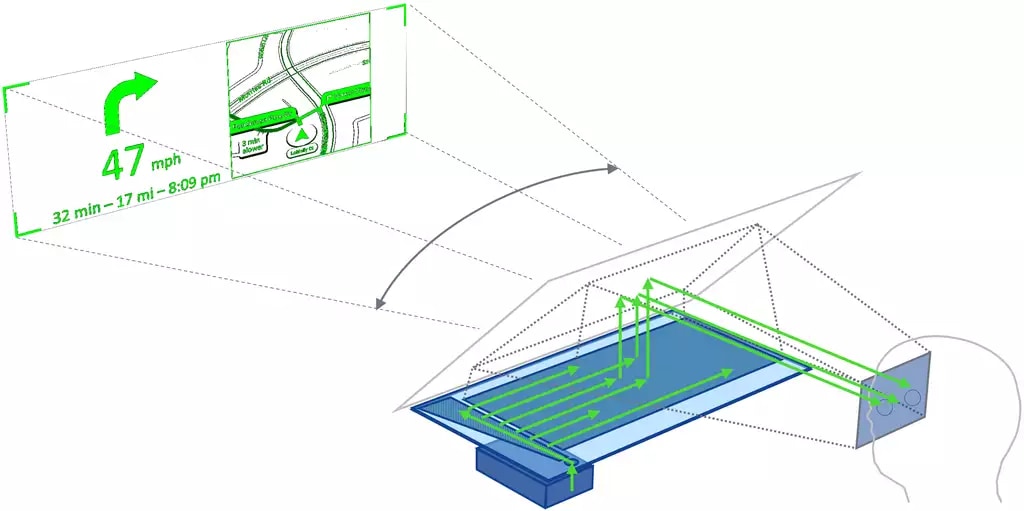

A user-friendly AR HUD, on the other hand, would be able to project virtual images - graphics and text - at multiple focal distances, creating the illusion that the cues are a natural extension of the real world. It would perform substantially better on other fronts such as navigation, hazard avoidance, location updates and spontaneous interaction with physical objects. By placing graphics into the driver’s field of view (FOV), AR HUDs will significantly boost situational awareness.

For instance, advanced driver assistance system (ADAS) alerts are generally conveyed by an audible alarm or a blinking symbol. An AR HUD can identify a potential safety hazard, such as a pedestrian or a piece of debris, mark it for the driver and track it.

“Augmented reality HUD allows the driver to be more connected and aware of his or her surroundings, whereas conventional HUDs mostly just show information about the car,” says Heikki Harju, Director, Nokia Tech Ventures.

Diffractive optics

The biggest obstacle to combining AR with HUD in its present configuration is the size requirement of conventional optics, which is imposed by fundamental physics. Increasing the field of view or image size sharply increases the physical dimensions of the HUD, making an AR-capable unit too bulky to fit behind the dashboard. This is where the diffractive optics of Nokia’s waveguide technology comes in.

Marja Salmimaa and Toni Järvenpää, researchers at Nokia Bell Labs, have developed large waveguides with nanoscale gratings, using high refractive index materials that help pave the way for AR HUDs. The ground-breaking technology, already employed in wearable AR glasses, would substantially increase the virtual image size and project the image at infinity or at multiple focal distances without changing the volume of the unit itself.

Nokia Bell Labs is no stranger to diffractive optics and has done considerable research on the subject over the last two decades, producing patents that formed the bedrock for Salmimaa and Järvenpää’s work.

“Diffractive optics enjoys certain other advantages like doing away with special wedged windshields, issues related to incompatible sunglass polarization and solar heat concentration,” says Järvenpää.

Priming cars for next-generation HUDs

Although the benefits of AR HUDs are obvious, the legacy technologies used in present-day cars may prove to be an impediment.

Ordinary HUDs are linked to control units with low computing power that lack the capability to process high-velocity data at low latency, which means they cannot keep up with the changing environment of a fast-moving vehicle.
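To see why latency matters for overlays that must stay aligned with the road, the short sketch below computes how far a vehicle travels while the display is still processing a frame. The function name and the example values (100 km/h, 100 ms) are assumptions chosen for illustration, not measurements of any actual HUD.

```python
def distance_during_latency(speed_kmh: float, latency_ms: float) -> float:
    """Return the meters travelled at speed_kmh during latency_ms of delay."""
    if speed_kmh < 0 or latency_ms < 0:
        raise ValueError("speed and latency must be non-negative")
    speed_m_per_s = speed_kmh / 3.6      # convert km/h to m/s
    latency_s = latency_ms / 1000.0      # convert ms to s
    return speed_m_per_s * latency_s


if __name__ == "__main__":
    # Assumed values: motorway speed and a 100 ms end-to-end delay.
    drift = distance_during_latency(100.0, 100.0)
    print(f"{drift:.2f} m travelled before the overlay updates")
```

With these assumed values the car moves roughly 2.78 m before the overlay catches up, so a marker drawn around a pedestrian or a piece of debris would visibly lag behind the real object.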

Furthermore, these control units are poorly integrated with other components, limiting the kind of content that can be displayed. Low-speed connectivity may cause further latency for any information coming from the cloud. Fortunately, all these shortcomings are being addressed by the automotive industry.

Aside from the equipment, there is a significant lack of content that is crucial for a true AR experience. Finally, there is no intuitive user interface (UI) that can be used without taking one's eyes off the road. Since a HUD is not a touchscreen and the physical buttons are located elsewhere, voice control can help the driver interact with the car. Voice control has already started to appear on some models, enabling satnav and mobile phone control, entertainment and limited data display.

Artificial intelligence (AI) could play an important role by prioritizing situational and contextual content to match what the driver needs to see. It may rely on pre-defined rules or on deep neural networks trained on the behaviour of an average driver or of the person sitting behind the steering wheel. The technology could ensure that the driver is not overwhelmed by information, which could compromise safety by impairing decision-making and reaction times.
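The pre-defined-rules variant of such prioritization could look roughly like the sketch below. It is a minimal illustration, not Nokia's method: the `Cue` type, the category weights, the urgency scale and the `max_visible` limit are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Cue:
    """A piece of content that competes for space on the display (hypothetical)."""
    label: str
    category: str    # e.g. "hazard", "navigation", "info"
    urgency: float   # 0.0 (can wait) to 1.0 (immediate)


# Hypothetical rule table: a higher weight means the category matters more.
CATEGORY_WEIGHT = {"hazard": 3.0, "navigation": 2.0, "info": 1.0}


def prioritize(cues: Iterable[Cue], max_visible: int = 2) -> List[Cue]:
    """Return the highest-scoring cues, capped so the driver is not overloaded."""
    def score(cue: Cue) -> float:
        # Unknown categories get weight 0.0 and therefore sort last.
        return CATEGORY_WEIGHT.get(cue.category, 0.0) * cue.urgency

    ranked = sorted(cues, key=score, reverse=True)
    return ranked[:max_visible]


if __name__ == "__main__":
    pending = [
        Cue("Incoming call", "info", 0.9),
        Cue("Pedestrian ahead", "hazard", 0.8),
        Cue("Turn left in 200 m", "navigation", 0.6),
    ]
    for cue in prioritize(pending):
        print(cue.label)
```

In this example the pedestrian warning and the turn instruction are shown and the incoming call is held back. A learned model could replace the fixed weight table with scores derived from observed driver behaviour, while the cap on visible cues would still serve the same purpose.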

What comes next

While traditional HUDs have improved the driving experience, the advent of AR HUDs is truly transformational. A seamless meshing of electronics, software, and optics, the AR HUD could become the most important screen in the car. As autonomous driving takes center stage, drivers will control the vehicle more through conversation and gestures than by micromanaging buttons and dials.

AR HUDs can also help drivers understand ADAS as the system takes on more tasks related to vehicle control, gradually easing them into self-driving cars.

“Digital experiences enabled by the right user interface technologies are key to success. The augmented reality HUD promises to change automotive user interfaces and the in-car experience. Future HUDs will provide the driver an ultimate UI to perceive and react to a highly dynamic external environment,” says Salmimaa.

Additional reading: Augmented reality HUDs and the future of smart driving

Learn more: Nokia Bell Labs

Major benefits of AR HUD

- Enables sufficient field of view to overlay AR content

- Virtual image distance (VID) at infinity or at multiple distances

- Flat design and reduced space requirement

About Nokia

We create technology that helps the world act together.

As a trusted partner for critical networks, we are committed to innovation and technology leadership across mobile, fixed and cloud networks. We create value with intellectual property and long-term research, led by the award-winning Nokia Bell Labs.

Adhering to the highest standards of integrity and security, we help build the capabilities needed for a more productive, sustainable and inclusive world.

Media Inquiries:

Nokia

Communications

Phone: +358 10 448 4900

Email: press.services@nokia.com