Autonomous networks

Build networks that sense, think, and act

What is Nokia’s end-to-end autonomous networks strategy?

We build networks that sense, think, and act across every layer, from the underlying infrastructure to the apps and services you rely on. AI and automation are built in at every step to simplify complexity and advance the autonomy of your network. In fact, Level 4 automation is already delivering real business outcomes for some of our customers today.

Network demands are changing quickly, making the shift to self-optimizing, autonomous networks a practical strategy today. And backed by Nokia Bell Labs’ innovation, we can help guide the transition to self-evolving networks tomorrow.

How does an end-to-end autonomous network work?

Our Sense, Think, Act framework powers your entire network: it observes every domain, uses AI to predict issues, and acts autonomously. Across 5G, IP, optical, fixed, and cloud domains, our trusted network solutions continuously improve the performance and customer experience your network delivers.

Sense with observability

360° observability across 5G, IP, optical, and fixed networks gives you full-context awareness, actionable insights, and a foundation for modernizing operations across every domain.

Think with AI/ML

AI/ML models spot anomalies, predict bottlenecks, and act instantly. Cloud-native intelligence drives insights, while automated policies keep every corner of your network running flawlessly.

Act with automation

Closed-loop automation and cross-domain orchestration across all vendors and clouds ensure secure, Zero-X operations. Your network acts autonomously, constantly improving customer experience.

What sets Nokia’s end-to-end autonomous networks strategy apart?

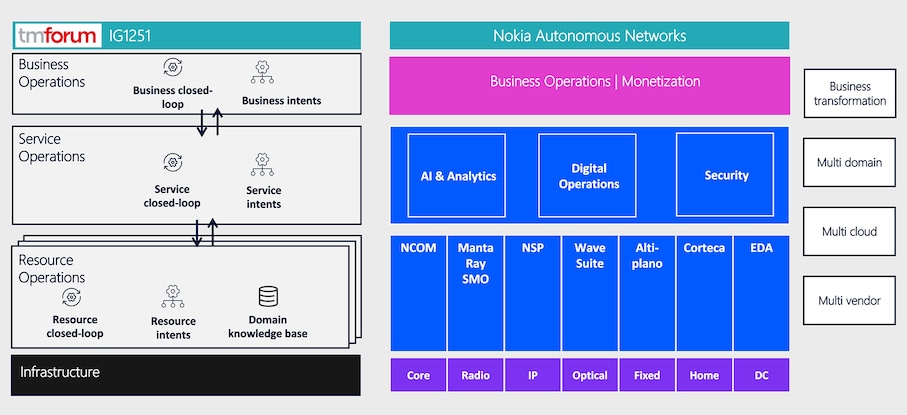

With field-proven expertise in both networks and software, we create solutions that work across fixed and mobile access, IP, optical, data center, and service management. This knowledge allows us to build reliable networks that sense, think, and act using AI and automation. Aligned with TM Forum’s Autonomous Networks framework, our solutions adapt to your network across any vendor, domain, and cloud environment and deliver outcomes that matter to you.

We are the only supplier to have already reached Level 4 of TM Forum’s framework, with industry-leading AI and automation running in large-scale, multi-supplier networks since 2019. This means you can move forward with confidence, knowing your operations are ready for the next level. And with our open application ecosystem, you gain the flexibility to integrate with existing systems, innovate on your terms, and evolve at the pace your business demands.

Autonomous networks stories

Autonomous networks in the real world

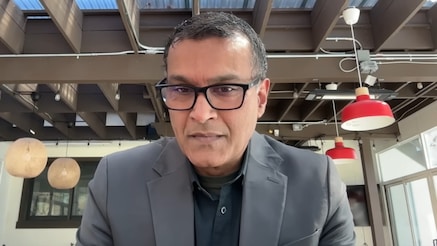

How Nokia views the future of network automation

Learn more about how automation, AI, and human expertise are coming together to simplify network complexity and support the evolution toward autonomous networks. This Fierce Network interview explores the challenges operators face today and highlights practical approaches to building more resilient, efficient, and future-ready network environments.

Related topics driving autonomous networks acceleration

Network automation

Simplify and transform the way you build, control and operate your network.

Artificial intelligence

Build AI-powered networks that meet the demands of AI applications.

Cybersecurity

Industry-leading end-to-end 5G security.

Core automation

The road ahead is connected. Make ground-breaking technology work for everyday life.

Learn more about autonomous networks

Video

AI for Autonomous networks: UScellular & Nokia partnership

Ready to talk?

Take your network to the next level of autonomy.

Discover how Nokia’s end-to-end network solutions will advance your autonomous networks roadmap.