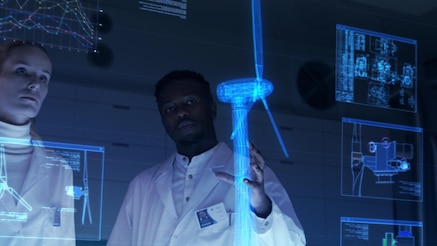

Rise of the industrial metaverse

Real Conversations podcast | S4 E16 | November 10, 2022

Biography

Luis Bollinger is the co-founder and CMO of Holo-Light, a company that specializes in immersive augmented and virtual reality technologies.

The popular view of the metaverse is of a vast immersive space for consumers that simply isn't ready yet. The reality is much more nuanced. In fact, there are big opportunities in the industrial metaverse which are already playing out. Luis Bollinger of Holo-Light discusses the industrial metaverse and describes some of the use cases he's seeing right now.

Below is a transcript of this podcast. Some parts have been edited for clarity.

Michael Hainsworth: The consumer metaverse gets a lot of the headlines these days, but applications and technologies powering the enterprise and industrial metaverse are doing the heavy lifting when it comes to realizing the fourth industrial revolution. So, what are those metaverse use cases? Holo-Light is building on existing technologies to bring us something powerful: an immersive streaming platform that likes to call itself the Netflix for XR apps. I began by asking co-founder Luis Bollinger to tell us how he defines the metaverse.

Luis Bollinger: Michael, it's a good question. I think our definition is more or less driven by what we see on the market and what we hear when we talk to enterprise companies. The metaverse itself is like a digital space in which human beings can interact with each other, with the digital world, or with digital objects. It's not just virtual reality, it's the combination of real and virtual worlds. It's the interaction with physical objects in combination, using AR and VR in a more advanced way.

MH: While virtual reality gets a lot of the attention in the extended reality universe, the metaverse, 90% of that industry's spending is expected to be on augmented reality. Does that jibe with your view?

LB: Yes, we see both technologies have their own use cases, and it's really a question of which technology fits the use case better. It's natural to see VR used on a broader range because it's been on the market longer, people have more experience with it, the price point is different, it's cheaper to work with this technology, so it's easier to roll it out from a cost perspective. But the AR use cases have a huge potential, and I think the potential is even greater here and this is what we also see when we talk to companies.

MH: The consumer metaverse is the one that seems to be grabbing the headlines right now. How much bigger do you see the industrial and the enterprise metaverse being over the consumer one where we're going to be playing games and watching bands perform virtually and things like that?

LB: Yes, so the consumer metaverse is something I'd like to dive deeper into, but I would say today the reality is companies are the ones driving the augmented and virtual reality use cases. They are heavily investing in these technologies. They have use cases, including critical ones, whereas on the consumer side we are maybe still missing the killer use cases that drive the technology and help people to adopt it.

The industrial metaverse is here today. The technologies that we have today, in combination, are able to form the industrial metaverse. I think the consumer metaverse is something which will evolve in parallel, but it will probably take a bit more time until we really see the consumers themselves out there in the metaverse.

MH: That's an interesting point. Meta just recently announced a new virtual reality headset that's US$1,500. The argument seemed to be that this was more about a professional work environment as opposed to playing video games. It's a harder sell in the metaverse if it's all about playing video games. But if you can actually get something done in it, that might be an easier sell.

LB: Yes. Over the last few years, the Quest 2 device got very positive feedback in terms of comfort, in terms of quality, but Facebook and now Meta, always had the issue that their technology was not meant to be used for enterprises because you needed a Facebook account, for example, to log in and such things. And they improved these things over the last months. They really wanted to make a change in the strategy, and I think this new device, in combination with the infrastructure they provide, will now really be an option for enterprises in the future. We are really looking forward to using this device also on the VR side.

MH: How would you define the industrial metaverse versus the enterprise metaverse?

LB: Honestly speaking, we don't really differentiate so much between these two terms, because we think it's a bit difficult anyway for people to really understand what the metaverse is, and our intention is to simplify things and not make it too complicated. But if I had to choose any difference there, then I would definitely say industrial is a focus for highly industrial companies, from the automotive sector, mechanical engineering, maybe aerospace. These companies very often have use cases in which they use the technology internally, maybe to train their people to do engineering on their products.

The enterprise metaverse, by contrast, is maybe a bit more open, maybe including suppliers and customers a bit more than the industrial metaverse in the beginning. That's how I would differentiate a bit, but I can imagine that these two things are more or less the same and it's probably hard for most people to see a difference.

MH: It strikes me that the enterprise metaverse is more about connecting people, whereas the industrial metaverse is more about taking what you're currently doing to a whole new level.

LB: Collaboration is one of the key factors of the metaverse anyway, because we want to connect people with each other, and I think the metaverse provides the infrastructure for that. It's key to maybe all forms of the metaverse, but I agree with what you say, the enterprise metaverse maybe pushes this in a much, much broader and larger way.

MH: Well, let's talk about some of those use cases. In your work with BMW on augmented reality for manufacturing, how much does AR reduce product development time to market?

LB: This is really a great use case and I think it clearly shows the value of the technology. Cars are not simple; they are very complex. They consist of many different parts and so the development process takes a lot of time and they're producing a lot of prototypes. They have to make a lot of design decisions and by using augmented reality to visualize prototypes at an early stage, to discuss them with colleagues, to check assembly processes and all these things, they are able to make design decisions up to 12 months earlier than before.

And this is heavily influencing, obviously, the time to market side, especially in the automotive industry with all these new players in the market like Tesla, for example. It's important for the existing ones to really make sure that they can stay competitive. It's also influencing the cost side, because if you can exchange a real physical prototype for a fully virtual one, at least for some stages during different iterations in the design process, then you can save a lot of money. I think everybody can imagine that a chassis, an engine, and all these things are very expensive.

MH: That's amazing, because I know that it can take anywhere from seven to eight years to develop a car from the idea in an engineer's head to something that's sitting on a lot to be sold to the consumer. You shave a whole year off that development process. That must be an incredible cost savings.

LB: It's one of the reasons why we are also able to scale the solution inside BMW. I would say that what's important to mention is that, obviously, there are different parts of the car and in some areas, you can save more time than in other areas. But what's clear is that a virtual visualization, in real size, with different opportunities to really work on the model, is making things easier and helps people to make the design decisions with more confidence. And also, you avoid errors because you can see things just much better than on a 2D screen.

MH: How is Holo-Light working on a healthcare metaverse? You've got a customer in Hatch?

LB: Yes. Hatch is a very cool company from the U.S. They are basically translating 2D scans of all kinds of body parts into 3D objects. And they want to help surgeons in the end to prepare better for surgeries. There is a lack of surgeons while the number of surgeries is increasing, and this is a problem. What they have done is build a training application to train surgeons on how to improve, how to make things faster, or how to plan the surgery itself. And they plan to have live support in the end.

And these two applications, they want to spread across the whole country, across the whole globe, and therefore they need the right infrastructure for the AR applications and the VR applications which are connected to this. With the industrial metaverse approach here, we want to really bring all these different applications on a centralized platform on the cloud and stream it to all kinds of users in the hospitals, for example, so that they can access the data. The data stays secure on the server side and it's very simple to just dive into the experience and use it.

MH: Tell me about that infrastructure component to it because there would be multiple ways at which you could develop a metaverse environment. One of them would be a dedicated app where everything is held on the device, but what Holo-Light is doing is more streaming-oriented and cloud-specific. Why go down that route?

LB: For us, the answer is very simple. Of the XR devices that we see in the market today, nearly every one is mobile. This means that the performance of these devices is also limited. You cannot visualize data out of the box in high quality. So, companies like BMW for example, if they want to visualize an engine, they cannot do that. They have to prepare the data before they can visualize it. And with streaming, they don't have to do this, they can use data out of the box. It's the same with CT scans and with building information modeling data. With realistic game assets in the future, it's the same.

We add the performance component from the cloud and bring the performance to the XR devices, to improve the experiences overall. And furthermore, as the data and the entire apps are on the server side, the data is more secure. It's not on the XR device side. If, for example, a device gets lost, the data is still protected. The idea is also to centralize XR applications because I think every company knows several AR/VR applications that live like island solutions in these big corporates, and this is not the way to scale XR use cases. I think this one platform approach with this technological streaming background is really a perfect setup to scale XR use cases in the right way and to make sure that quality and simplicity of usage are given.

MH: I find the streaming industry an interesting metaphor, generally speaking. At one time there was just one, it was Netflix, and then there was an explosion of these types of streaming services. And it wasn't until a company like Apple came along and put all of those different streaming services on one single screen, where you didn't have to worry about which app you were using and how you were going to get to it, that the consumer was suddenly more willing to engage in streaming services.

LB: Yes, I think here at Holo-Light, we also use video on demand services. And we are absolutely familiar with the concept behind it, and we wanted to think in a similar direction. I think nobody today wants to buy movies individually, unless you're a really big fan. But usually, you don't do this because you don't want to have the storage on your computer. You don't want to download it. It takes just too long. To consume in that way is just much better and it's also more cost effective in the future because the subscriptions that you pay nowadays for these things are really low compared to the price of an individual movie that you bought in the store before.

And I think it's similar in the AR/VR environment because, if apps are running on the cloud, then you don't need to build up the infrastructure on your own site. We have companies that go with 5G campuses or with edge computing solutions, and others that want to go with the cloud because they rely on AWS, Azure, or Google. And I think this flexibility is really important for enterprises, so they can set up an infrastructure where they feel comfortable and where they really believe that they are able to use this technology in the future.

MH: Your point about 5G and edge cloud is well taken because these are two technologies that go hand in hand with this kind of development. Particularly as we expand the capabilities of the hardware, we want to offload the processing of AR/VR into the cloud and the ultra-low latency of 5G, I can imagine, is critical to success.

LB: It's definitely pushing our technology too. We can already use streaming technologies today in 4G infrastructures, for example. It's not like it would not work today, but it's all about having a real time experience on your XR device. Lower latency and higher bandwidth become really critical, especially with an increasing number of users at the same time. For the combination of 5G, our streaming technology, and the industrial metaverse itself, there's no alternative.

I think in the future this has to work together. And the good thing is that we are collaborating, obviously, today with companies like Nokia, like Telecom and these others. They provide the infrastructure; we provide the software part and the platform part, and I think this is a good combination and this is helping enterprises to find a good infrastructure where they can put their solutions on.

MH: That's an interesting point about scale. The importance of being able to create this kind of content, where hundreds, thousands of people can simultaneously be accessing it. And it makes me think more about the enterprise side of the equation. Gartner Peer Insights reports that the most compelling use case for enterprise is training. The ability to have hundreds or thousands of people simultaneously learning how to use a thing or get onboarded within an organization. It seems to make VR based training certainly compelling.

LB: Yes, I would say when we talk to companies and ask them, "Hey, what use cases do you have? What things really work? Where is acceptance from the employees?", training is for sure number one, sometimes number two. And there are reasons for that. With VR devices, most people are now familiar with the technology and with the hardware, and it's cheaper. For around €500, or dollars, you get a device. And training is such a great use case because everybody who's new in something needs to learn something and we are all learning a lot. And this technology just makes it easier to learn.

It's better than reading a PDF or watching a video. It's experiencing things. It's closer to reality, I think, and that's the reason why VR training is successful. But there's obviously a need to scale it in the right way because I know many companies are actually thinking at the moment, "How can we make sure that these thousands of users, wherever they are, can really access these different trainings?" It's a big challenge and I think the industrial metaverse, as a concept overall, can really help to realize these things and make sure that it also runs smoothly.

MH: Meetings are the second most compelling use case for the metaverse. In the old days, we used to complain that the meeting could have been an email. Today we complain, this Zoom call could have been an email. How do we ensure that a metaverse meeting is compelling enough to avoid the same complaint?

LB: I think it's always about using the things in the right way. It's good that we have one more option in the future. We also think that VR is not replacing emails, it's just another way of communicating with each other. If we just think about Teams sessions or Zoom sessions, they make sense if you use it in the right way. If it's just updating each other with one or two sentences, I can do that in an email.

I know many Teams meetings where, I don't know, 20 people are joining, three people are speaking, so I don't know whether this was the right medium to do it. But in the end, I think what VR and AR can do is replace some onsite meetings. And I think it's just the fact that we will have more virtual meetings in the future, and therefore we need this additional option to see each other, to really not just hear each other and see a face, but really see the interaction. And it's not just about the people interacting with each other but interacting with additional content. And this content is not always 2D, it's 3D, and therefore I think it's relevant to have this opportunity. And we will see in the future how the split will be. How much of your day do you really use which tool? I'm really excited about that.

MH: What about the importance of standardizations? You say it'd be interesting to see which tools people use. Even within the 2D world, you've got Zoom, you've got Teams, you've got FaceTime, you've got all these different competing technologies that aren't running on the same standards. How important do you see standardization to the path to industrial metaverse?

LB: Yeah, I think standardization makes things much easier because it's a huge effort to support different XR devices. I think it's also about compatibility in the end, and this is something I want to highlight and something where we at Holo-Light also want to help, because we've done many projects in the AR/VR industry and whenever someone asked, "Hey, can you bring this application also to another XR device?" we were like, "Oh, okay, yes, it might also be Unity based, but we are using this SDK and maybe it doesn't run on the other device." What we want to guarantee if someone is using our streaming is that they can bring one application to different XR devices as well, because there are devices which fit better for a use case, but there are also sometimes different opportunities.

Sometimes you can stay at home and use a VR device while someone else is on site and using AR glasses. And I think we just want to leave it up to the user to decide which device he or she wants to use. I think this is a good example of standardization. The more companies work together and make sure that their technologies and devices are compatible, the easier it will be for the users in the end to make sure that they can set up a good infrastructure and that they are not so bound to just one provider.

MH: Are we seeing progress on this front? Or is it still a 'wild west' where everybody's trying to come up with the best way of doing something?

LB: No, I would definitely say that we have improved there. I mean, there are forums where the big corporates, the other providers, are coming together. I think just yesterday I read news about Microsoft and Meta working together and also the new Meta device supporting Office and some other things. From the hardware side, it's there. And from the software side too. I mean, we work together. We work on different engines. We work with devices.

And I think, as a software provider, it's really relevant to make sure that you are compatible with these things. I see it's much better than five or seven years ago. But I would also say that there's still some work to be done because with every new update, you need to make sure that your software stays compatible, and that takes effort. And it's not like it always works out of the box.

MH: Two out of three B2B companies surveyed say they're currently educating themselves about the possibilities the metaverse provides. How would you recommend they go about that education?

LB: We should not forget that it's still a new term. And I think the metaverse is a combination of existing technologies maybe brought to the next level, and also connected to existing programs and databases that companies already have. We shouldn't think of it as something that is super fancy or so far away. I think that there is a journey to the industrial metaverse, and companies should understand the basic technologies behind it. You have to know what AR can do. You have to know what VR can do. You need to be familiar with other technologies around it.

And if you understand each individual technology, you are also able to understand the concept of the metaverse in a very good way. And obviously people have to start, maybe go to trade fairs, get some first experience with it. But I also think it's difficult to understand the differences because not every metaverse approach is the same. And there is the challenge. It's not like there's one definition and that's it. There are many different ways of seeing it, and that's why it's relevant to definitely talk about it now. But use the technologies behind the metaverse and understand them in a better way. I think this will help companies a lot in being successful with the industrial metaverse.
