The automation path towards brighter, more responsive optical networks

The volume of machine-to-machine digital traffic is about to overtake that of user-to-user and user-to-machine traffic, with the servers that implement services as the predominant contributors. Propelled by technologies such as 5G, machine learning and software-defined networking (SDN), networks are moving closer to seamless integration with information technology (IT). The result is infrastructure that responds better to the orchestration of bandwidth-hungry services and supports continuous service innovation.

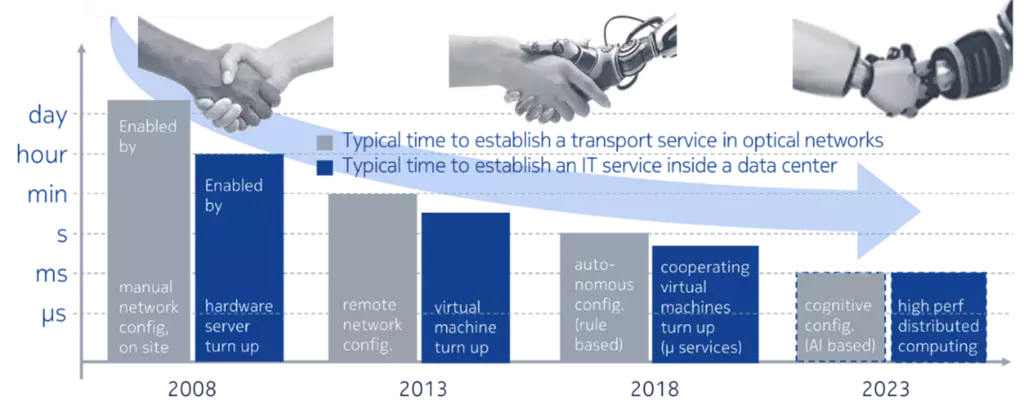

Optical networks will underpin this new infrastructure, so they must also be made more responsive through automation. As shown in Figure 1, the innovation required to make optical networks more responsive could be within reach in the coming years. Soon, a lightpath (i.e. wavelength channel) will be able to be activated as quickly as setting up transient application connectivity inside a data center.

Figure 1: Convergence of typical service dynamics in optical and in data center networks (source Nokia Bell Labs)

The trends depicted in Figure 1 point to an opportunity for massive transformation, in which the IT and network domains work as one to create responsive compute, storage and network infrastructure. Why should computing jobs process data on a single server if data can be moved anywhere at any time? Why should networks remain unchanged for years if they can be automated and reprogrammed as easily as computers? In the new, more responsive digital infrastructure, each domain will inherit the best of the other to overcome their respective limitations.

Dependability and resilience will play a central role as IT and the network are united. It is acceptable to reboot a computer after a software or hardware crash, but a failure in an optical network can destroy petabits of valuable data. In terms of outage probability, today’s datacom networks are five to ten orders of magnitude less dependable than telecom networks, and they offer no guaranteed delivery times. Datacom flows can wait in queues for a long time if resources are unavailable, or if they need to be resent after a loss. Some applications can tolerate these limitations, but time-sensitive applications (e.g. those involving the real-time cooperation of machines or users) cannot.

In bringing the best attributes of the IT world to the network domain, it is essential to keep network resilience intact, at least for the data flows that demand it. This is particularly important for infrastructure automation: proper risk management will be key to the success of innovations in automated network operations.

What do operators need to make more responsive optical networks a reality?

To start, operators need to be able to trust every automation mechanism. These mechanisms combine three basic ingredients: models, sensors and actuators. All three must be made fully trustable to support the automation that reliable, responsive networks require.

Models fed by real-time sensor readings

Models are the foundation of automation mechanisms. To capture the complexity of a network, a model should act as a digital twin of that network. In other words, it should be an abstraction that connects the physical topology, the underlying service components (wavelengths, allocation plan, bitrate, modulation format), and the characteristics and settings of the network components (amplifiers, fibers, terminals, nodes). Its aim should be to help operators automatically predict the quality of transmission (QoT) of services, and whether it is sufficient to support them, before they are turned on.
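To make the idea of a digital twin more concrete, here is a minimal Python sketch, not Nokia’s data model, that links a lightpath to the fiber spans it crosses and predicts its QoT before turn-on. It assumes an amplified-spontaneous-emission (ASE) only view of the link, uses the common rule of thumb OSNR ≈ 58 + P_in − NF − L (in dB, 0.1 nm reference bandwidth near 1550 nm) for each span, and adds the span contributions in linear units. The class names, fields and the required-OSNR threshold are hypothetical.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Span:
    """One fiber span plus the amplifier that compensates its loss (hypothetical schema)."""
    length_km: float
    loss_db_per_km: float       # fiber attenuation
    amp_noise_figure_db: float  # noise figure of the compensating amplifier

    @property
    def loss_db(self) -> float:
        return self.length_km * self.loss_db_per_km


@dataclass
class Lightpath:
    """A wavelength channel (service component) routed over a sequence of spans."""
    name: str
    launch_power_dbm: float   # per-channel power at each span input
    required_osnr_db: float   # threshold for the chosen bitrate/modulation format (assumed)
    spans: list[Span] = field(default_factory=list)


def predict_osnr_db(lp: Lightpath) -> float:
    """ASE-only OSNR (0.1 nm reference bandwidth); nonlinear effects are ignored."""
    if not lp.spans:
        raise ValueError("a lightpath needs at least one span")
    inverse_osnr = 0.0
    for span in lp.spans:
        # 58 dB ~ -10*log10(h*nu*12.5 GHz / 1 mW) at 1550 nm.
        span_osnr_db = 58.0 + lp.launch_power_dbm - span.amp_noise_figure_db - span.loss_db
        inverse_osnr += 10 ** (-span_osnr_db / 10)  # noise contributions add linearly
    return -10 * math.log10(inverse_osnr)


def qot_margin_db(lp: Lightpath) -> float:
    """A positive margin means the twin predicts the service can be turned on."""
    return predict_osnr_db(lp) - lp.required_osnr_db


# Ten identical 80 km spans at 0.2 dB/km (16 dB loss), NF = 5 dB, 1 dBm per channel.
lp = Lightpath("lp-1", launch_power_dbm=1.0, required_osnr_db=15.0,
               spans=[Span(80, 0.2, 5.0) for _ in range(10)])
print(f"{predict_osnr_db(lp):.1f} dB OSNR, {qot_margin_db(lp):.1f} dB margin")  # 28.0 dB OSNR, 13.0 dB margin
```

A real twin would add nonlinear interference, filtering penalties and component aging on top of this linear noise budget.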

Largely static digital twins of the physical fiber infrastructure have been used commercially since the invention of wavelength division multiplexing (WDM) systems, mainly in resource-planning software tools. These models were later extended to build more dynamic control planes, and revisited when coherent systems became widespread. Realizing a more responsive network calls for a greater focus on accurately assessing every network model to ensure that it supports the required degree of optical network automation. No model is perfect, but any residual uncertainty must be known and fully owned before a trustable automation mechanism can be built.

While it’s tempting to replicate fiber propagation by numerically integrating proven propagation equations (such as the nonlinear Schrödinger equation), doing so takes too long. It is also hard to scale this approach to predict the performance of thousands of lightpaths (i.e. wavelengths) in the required timeframes. Simpler analytical models, such as the nonlinear phase model or the Gaussian noise model, are less accurate but fast substitutes.
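As an illustration of why closed-form models are fast, the sketch below uses the simplest form of a Gaussian-noise (GN) style estimate, in which the nonlinear interference (NLI) power grows as η·P³. It assumes the lumped NLI coefficient η and the ASE noise power are already known; in the actual GN model, η is derived from fiber parameters, the channel plan and the number of spans, which this sketch does not do. The numbers are hypothetical. Setting dSNR/dP = 0 gives an optimal launch power P_opt = (P_ASE / 2η)^(1/3), at which the NLI power equals half the ASE power.

```python
import math


def gn_snr(p_ch_w: float, p_ase_w: float, eta_per_w2: float) -> float:
    """Linear SNR of one channel: signal over ASE noise plus NLI noise (eta * P^3)."""
    return p_ch_w / (p_ase_w + eta_per_w2 * p_ch_w ** 3)


def optimal_launch_power_w(p_ase_w: float, eta_per_w2: float) -> float:
    """Maximum of gn_snr: dSNR/dP = 0 gives P_ASE = 2 * eta * P^3."""
    return (p_ase_w / (2.0 * eta_per_w2)) ** (1.0 / 3.0)


def to_db(x: float) -> float:
    return 10 * math.log10(x)


# Hypothetical link values: 2 uW of ASE noise and eta = 1000 1/W^2 in the channel bandwidth.
P_ASE, ETA = 2e-6, 1e3
p_opt = optimal_launch_power_w(P_ASE, ETA)
print(f"P_opt = {to_db(p_opt / 1e-3):.2f} dBm, SNR = {to_db(gn_snr(p_opt, P_ASE, ETA)):.2f} dB")
```

Each evaluation costs a handful of floating-point operations, which is what lets this class of model scale to thousands of lightpaths, whereas a split-step simulation of the same link is far more expensive.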

Future posts in this blog series will explore a new family of models that leverage machine learning and are attracting massive research interest. Like their predecessors, these models need to come with a confidence interval to prevent unexpected outages during network operation. Extra care should be taken with rare, outlier physical events such as fiber breaks, storms, lightning strikes and power surges.
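One generic way to attach a confidence interval to any learned QoT predictor is split conformal prediction; it is named here as an illustrative technique, not as the method the upcoming posts describe. The sketch assumes a held-out calibration set of (configuration, measured QoT) pairs and a hypothetical predictor. Its coverage guarantee assumes future data behave like the calibration data (exchangeability), so it says nothing about rare events such as fiber breaks, which is exactly why those need extra care.

```python
import math
from typing import Callable, Sequence


def conformal_half_width(predict: Callable[[float], float],
                         xs_cal: Sequence[float], ys_cal: Sequence[float],
                         alpha: float = 0.1) -> float:
    """Half-width q so that [pred - q, pred + q] covers at least 1 - alpha of new points
    (under exchangeability), computed from absolute calibration residuals."""
    residuals = sorted(abs(y - predict(x)) for x, y in zip(xs_cal, ys_cal))
    n = len(residuals)
    k = math.ceil((n + 1) * (1 - alpha))  # rank of the conformal quantile
    if k > n:
        return math.inf  # too few calibration points for the requested coverage
    return residuals[k - 1]


def predict_interval(predict: Callable[[float], float], x: float, q: float) -> tuple[float, float]:
    y_hat = predict(x)
    return y_hat - q, y_hat + q


def model(n_spans: float) -> float:
    """Hypothetical trained predictor: SNR in dB as a function of span count."""
    return 30.0 - 0.5 * n_spans


# Hypothetical calibration data: measured SNR deviates from the prediction by 0.1 to 1.0 dB.
xs = list(range(1, 11))
ys = [model(x) + (0.1 * i if i % 2 else -0.1 * i) for i, x in enumerate(xs, start=1)]
q = conformal_half_width(model, xs, ys, alpha=0.1)
print(predict_interval(model, 12, q))
```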

Building on machine learning, artificial intelligence (AI) can support even more dynamic digital twin models by learning from the network over a few days or months. However, it can only interpolate or extrapolate from configurations it has already seen. Human intelligence and intuition may not learn as quickly as AI, but humans can accumulate lessons learned over time and adapt them to teach others. For centuries, scientists have stretched their models to account for outlier events. Human judgment therefore deserves priority for many critical decisions.
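A learned model’s blind spot can at least be detected. The following sketch, assuming configurations are described by a few numeric features with hypothetical names, records the range of each feature seen during training and routes any query outside that envelope to a human rather than the automated path. A per-feature bounding box is a deliberately crude test: a point can sit inside the box yet far from any training example, so it should be read as a minimum safeguard.

```python
from typing import Mapping, Sequence


def training_envelope(configs: Sequence[Mapping[str, float]]) -> dict[str, tuple[float, float]]:
    """Per-feature (min, max) over all configurations the model was trained on."""
    features = configs[0].keys()
    return {f: (min(c[f] for c in configs), max(c[f] for c in configs)) for f in features}


def is_extrapolation(query: Mapping[str, float],
                     envelope: Mapping[str, tuple[float, float]]) -> bool:
    """True if any feature is missing from the query or lies outside its training range."""
    return any(f not in query or not lo <= query[f] <= hi for f, (lo, hi) in envelope.items())


def route(query: Mapping[str, float], envelope: Mapping[str, tuple[float, float]]) -> str:
    return "human_review" if is_extrapolation(query, envelope) else "automated"


# Hypothetical configurations seen during training.
seen = [
    {"n_spans": 4, "launch_power_dbm": 0.0, "n_channels": 40},
    {"n_spans": 12, "launch_power_dbm": 2.0, "n_channels": 80},
]
env = training_envelope(seen)
print(route({"n_spans": 8, "launch_power_dbm": 1.0, "n_channels": 64}, env))   # automated
print(route({"n_spans": 20, "launch_power_dbm": 1.0, "n_channels": 64}, env))  # human_review
```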

Creating trustable input parameters

Models are fed by multiple input parameters, such as the features of network subparts, component characteristics and sensor readings. All these parameters should be made as trustable as the model itself, which means that any residual uncertainty must be fully known and owned. Unless more accurate data is available, models typically rely on suppliers’ specifications and assume worst-case characteristics. As a result, the guarantee of hassle-free network operation is paid for with inefficient, and in some cases massive, overprovisioning of resources.
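The cost of planning with worst-case specifications can be estimated with the ASE-only rule of thumb, written here for N identical amplified spans as OSNR ≈ 58 + P − NF − L − 10·log10(N) in dB. The sketch compares a design that assumes worst-case noise figure and fiber attenuation with one that uses measured values; the gap is margin that ends up as overprovisioning. All numbers are hypothetical illustrations, not supplier data.

```python
import math


def osnr_identical_spans_db(p_dbm: float, nf_db: float, span_loss_db: float, n_spans: int) -> float:
    """ASE-only OSNR (0.1 nm reference bandwidth) for n identical amplified spans."""
    return 58.0 + p_dbm - nf_db - span_loss_db - 10 * math.log10(n_spans)


SPAN_KM, N_SPANS, P_DBM = 80, 10, 1.0

# Worst-case datasheet values vs values measured on the installed link (both hypothetical).
worst = osnr_identical_spans_db(P_DBM, nf_db=6.0, span_loss_db=0.25 * SPAN_KM, n_spans=N_SPANS)
measured = osnr_identical_spans_db(P_DBM, nf_db=5.0, span_loss_db=0.21 * SPAN_KM, n_spans=N_SPANS)

hidden_margin_db = measured - worst  # margin locked away by worst-case planning
print(f"worst-case {worst:.1f} dB, measured {measured:.1f} dB, hidden margin {hidden_margin_db:.1f} dB")
```

Reclaiming such a margin is only safe if the measured values themselves come with a known uncertainty.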

New telemetry capabilities can reduce the need for overprovisioning by gathering large volumes of sensor readings. Coherent transceivers based on digital signal processors (DSPs), such as the Nokia PSE family, can feed models by uploading many extremely precise sensor readings on the physics of propagation, on environmental conditions, and on multiple performance and network health indicators. As time goes by and environmental conditions vary, telemetry readings fluctuate from one value to the next. Dependable automation requires that they stay within known boundaries.
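A dependable pipeline can check, reading by reading, that telemetry stays inside its known boundaries. The sketch below assumes a hypothetical record format (timestamp, metric name, value) and hypothetical per-metric bounds; real transceiver telemetry schemas differ and are not reproduced here.

```python
from dataclasses import dataclass
from typing import Iterable, Mapping


@dataclass(frozen=True)
class Reading:
    timestamp_s: float  # time of the reading, in seconds
    metric: str         # hypothetical metric name, e.g. "snr_db"
    value: float


def out_of_bounds(readings: Iterable[Reading],
                  bounds: Mapping[str, tuple[float, float]]) -> list[Reading]:
    """Readings outside their metric's known [low, high] interval, or with no known bounds."""
    flagged = []
    for r in readings:
        if r.metric not in bounds:
            flagged.append(r)  # no known boundary, so automation cannot rely on it
            continue
        low, high = bounds[r.metric]
        if not low <= r.value <= high:
            flagged.append(r)
    return flagged


# Hypothetical bounds and readings.
bounds = {"snr_db": (14.0, 22.0), "chromatic_dispersion_ps_nm": (9000.0, 14000.0)}
stream = [
    Reading(0.0, "snr_db", 18.2),
    Reading(60.0, "snr_db", 17.9),
    Reading(120.0, "snr_db", 12.5),  # a jump outside the known range
    Reading(120.0, "chromatic_dispersion_ps_nm", 13600.0),
]
for r in out_of_bounds(stream, bounds):
    print(f"t={r.timestamp_s:.0f}s {r.metric}={r.value} outside known bounds")
```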

The sensor readings should therefore all be certified, with a known and guaranteed confidence interval across all possible conditions of use. Such certification should become as commonplace in our industry as it is for metrology instruments. In addition, all uploaded telemetry indicators need to be timestamped, put in the appropriate context and postprocessed to narrow down the uncertainty of their estimates as recordings accumulate. Historical trends can then be extracted, and aging trends extrapolated from them. Machine learning can help narrow down uncertainties, for example by classifying fiber types and thereby auto-discovering the fiber infrastructure.
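The postprocessing described above can be sketched with two standard tools: Welford’s online algorithm for the mean and standard deviation of accumulating readings, and an ordinary least-squares line for extracting and extrapolating an aging trend. Note the assumption behind the narrowing: if readings scatter independently around a stable value, the standard error of their mean falls as σ/√n, while the spread of individual readings does not shrink. A slow drift violates that assumption, which is why the trend is fitted separately. The readings and units are hypothetical.

```python
import math
from typing import Sequence


class RunningStats:
    """Welford's online mean and sample variance for a stream of readings."""

    def __init__(self) -> None:
        self.n = 0
        self.mean = 0.0
        self._m2 = 0.0  # running sum of squared deviations from the mean

    def add(self, x: float) -> None:
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (x - self.mean)

    @property
    def stdev(self) -> float:
        return math.sqrt(self._m2 / (self.n - 1)) if self.n > 1 else float("nan")

    @property
    def std_error(self) -> float:
        """Uncertainty of the mean; shrinks as 1/sqrt(n) for independent readings."""
        return self.stdev / math.sqrt(self.n) if self.n > 1 else float("nan")


def linear_trend(times: Sequence[float], values: Sequence[float]) -> tuple[float, float]:
    """Ordinary least-squares slope and intercept of values versus time."""
    n = len(times)
    t_mean, v_mean = sum(times) / n, sum(values) / n
    sxx = sum((t - t_mean) ** 2 for t in times)
    if sxx == 0:
        raise ValueError("need readings at two or more distinct times")
    sxy = sum((t - t_mean) * (v - v_mean) for t, v in zip(times, values))
    slope = sxy / sxx
    return slope, v_mean - slope * t_mean


stats = RunningStats()
for snr in [18.0, 18.4, 17.8, 18.2, 18.1, 17.9]:  # hypothetical SNR readings in dB
    stats.add(snr)
print(f"mean {stats.mean:.2f} dB, std error {stats.std_error:.3f} dB")

slope, intercept = linear_trend([0, 1, 2, 3], [18.0, 17.9, 17.8, 17.7])  # hypothetical monthly means
print(f"aging trend {slope:+.2f} dB/month, month 24 estimate {slope * 24 + intercept:.1f} dB")
```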

Creating trustable actuators

Actuators must also be made as trustable as sensors. The range of mismatch between the desired state of an actuator (set by the associated control command) and its actual state should likewise be known in advance and certified by the manufacturer across all possible conditions of use.
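Checking an actuator against its certified mismatch range is straightforward once both the commanded and the read-back states are available. The sketch assumes a hypothetical variable optical attenuator (VOA) with a hypothetical certified tolerance, and a log of (desired, actual) pairs; it reports the commands whose actual state fell outside the certified range.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class ActuatorSpec:
    name: str
    certified_tolerance: float  # max |actual - desired| certified by the manufacturer (hypothetical)


def mismatch(desired: float, actual: float) -> float:
    """Signed error between the commanded state and the state actually reached."""
    return actual - desired


def audit(spec: ActuatorSpec, log: Sequence[tuple[float, float]]) -> list[tuple[float, float]]:
    """Return the (desired, actual) pairs whose mismatch exceeds the certified tolerance."""
    return [(d, a) for d, a in log if abs(mismatch(d, a)) > spec.certified_tolerance]


voa = ActuatorSpec("voa-attenuation-db", certified_tolerance=0.5)
log = [(5.0, 5.3), (6.0, 5.8), (7.0, 7.7)]  # hypothetical commanded vs measured attenuation in dB
print(audit(voa, log))  # [(7.0, 7.7)]
```

In this sketch, any non-empty audit result marks the actuator as unfit for closed-loop use until the out-of-tolerance behavior is explained.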

Automation made safe

Uncertainties from the model, sensors and actuators accumulate in every automation mechanism, but trust should never be put at risk. An important rule of thumb, as important as Asimov’s Three Laws of Robotics, is that a loop should not be closed, either manually or automatically, if the uncertainties exceed the magnitude of the change predicted by the model. As an analogy, doctors who send patients with temperatures above 38°C for costly tests could waste healthcare resources on patients whose actual temperature is 37.5°C if they use thermometers with an uncertainty greater than 0.5°C.
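The rule of thumb above can be written as a simple gate. The sketch combines model, sensor and actuator uncertainties either by root-sum-square, which assumes the three error sources are independent, or by a plain sum, the conservative worst case when independence is not established; both combination rules are assumptions of this illustration. It then allows the loop to close only if the predicted change exceeds the combined uncertainty, and reuses the thermometer analogy as a check.

```python
import math


def combined_uncertainty(*components: float, independent: bool = True) -> float:
    """Root-sum-square for independent error sources, linear sum otherwise (worst case)."""
    if independent:
        return math.sqrt(sum(u * u for u in components))
    return sum(components)


def may_close_loop(predicted_change: float, model_u: float, sensor_u: float,
                   actuator_u: float, independent: bool = True) -> bool:
    """Close the loop only if the predicted change is larger than the accumulated uncertainty."""
    u = combined_uncertainty(model_u, sensor_u, actuator_u, independent=independent)
    return abs(predicted_change) > u


def reading_may_cross(actual_c: float, threshold_c: float, thermometer_u_c: float) -> bool:
    """Thermometer analogy: can a patient below the threshold read above it?"""
    return actual_c + thermometer_u_c > threshold_c


# Hypothetical case: the twin predicts a 0.6 dB SNR gain from re-tuning channel powers.
print(may_close_loop(0.6, model_u=0.3, sensor_u=0.4, actuator_u=0.2))                     # True (RSS ~0.54 dB)
print(may_close_loop(0.6, model_u=0.3, sensor_u=0.4, actuator_u=0.2, independent=False))  # False (sum 0.9 dB)
print(reading_may_cross(37.5, 38.0, 0.6))  # True
```

The same 0.6 dB prediction passes or fails depending on whether independence can be justified, which is why the error sources themselves need to be characterized.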

The journey toward more responsive networks is underway

As we’ll discuss in subsequent blog posts, the journey toward more responsive automated optical networks has begun, driven by innovative new approaches. By harnessing the power of automation, operators can radically transform resource allocation, optimization and repair to lower network total cost of ownership (TCO) and grow revenue. This journey won’t happen in a day, but it also won’t require expensive infrastructure upgrades. A stepwise approach is feasible: it allows changes to be incorporated at the pace of market readiness without losing any of the benefits already accumulated.

Steps for just enough resources

To preserve investment cash until it is needed, network operators could move from allocating resources 15 years ahead to allocating them one year ahead, or even in the next maintenance window. To realize the potential of unused network resources, they could gradually move from today’s mostly on-off health assessment of lightpaths to an analog health assessment as actual telemetry readings (rather than worst-case planning values) become available. To further preserve CAPEX, operators could move from all-greenfield resource upgrades to brownfield upgrades, where new resources are allocated and configured for higher performance based on the observed behavior of transceivers that are already installed.
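The difference between on-off and analog health assessment, and how a measured margin could inform a brownfield upgrade, can be sketched as follows. The SNR thresholds per transceiver mode are hypothetical placeholders, not specifications of any product, and the certified measurement uncertainty is subtracted so that the decision stays conservative.

```python
# Hypothetical (line rate in Gb/s, required SNR in dB) pairs; placeholders, not vendor data.
MODES = [(100, 12.0), (200, 17.0), (300, 20.0), (400, 23.0)]


def on_off_health(measured_snr_db: float, required_snr_db: float) -> str:
    """Today's mostly binary view: the lightpath either works or it does not."""
    return "up" if measured_snr_db >= required_snr_db else "down"


def analog_health(measured_snr_db: float, required_snr_db: float,
                  measurement_u_db: float) -> tuple[float, float]:
    """Margin above the requirement, and the same margin after removing measurement uncertainty."""
    margin = measured_snr_db - required_snr_db
    return margin, margin - measurement_u_db


def best_mode(measured_snr_db: float, measurement_u_db: float, modes=MODES):
    """Highest-rate mode whose requirement is still met if the reading is optimistic by u dB."""
    usable_snr = measured_snr_db - measurement_u_db
    feasible = [m for m in modes if m[1] <= usable_snr]
    return max(feasible, default=None)


# An installed 100G lightpath reporting 21.5 dB SNR with a 1.0 dB certified uncertainty.
print(on_off_health(21.5, 12.0))       # up
print(analog_health(21.5, 12.0, 1.0))  # (9.5, 8.5)
print(best_mode(21.5, 1.0))            # (300, 20.0)
```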

Steps for optimizing the settings of network resources

The first steps are to gain experience with, and develop trust in, automation algorithms. The network operator builds trust by cross-checking that the measured performance after every manual action falls within the performance range predicted by a network digital twin, including safeguard margins. It can then narrow the safeguard margins as the digital twin gains accuracy. Finally, it can close control loops, removing the need for manual intervention. However, operators need to monitor control loops for uncertainties that exceed the predicted changes, and for correlations with network alarms. Decisions deemed critical must remain open to cross-checking by humans.
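The progression from cross-checking to closed-loop operation can be expressed as a small policy object. Everything in it is a hypothetical illustration: the thresholds, the margin-shrinking factor, and the choice to fall back to the original safeguard margin after any miss. It records whether each measured outcome falls within the twin’s prediction plus the current safeguard margin, narrows the margin after a run of successes, and reports when the track record is long enough to consider closing the loop.

```python
class TrustTracker:
    """Hypothetical policy for building trust in a digital twin before closing control loops."""

    def __init__(self, margin_db: float, min_margin_db: float, shrink_factor: float = 0.8,
                 streak_to_shrink: int = 20, streak_to_close: int = 100) -> None:
        self.initial_margin_db = margin_db
        self.margin_db = margin_db          # current safeguard margin around predictions
        self.min_margin_db = min_margin_db  # never narrow below this floor
        self.shrink_factor = shrink_factor
        self.streak_to_shrink = streak_to_shrink
        self.streak_to_close = streak_to_close
        self.streak = 0                     # consecutive predictions confirmed by measurement

    def record(self, predicted_db: float, measured_db: float) -> bool:
        """Cross-check one manual action; return True if the measurement matched the prediction."""
        ok = abs(measured_db - predicted_db) <= self.margin_db
        if ok:
            self.streak += 1
            if self.streak % self.streak_to_shrink == 0:
                self.margin_db = max(self.min_margin_db, self.margin_db * self.shrink_factor)
        else:
            # A miss resets the track record and restores the original safeguard margin.
            self.streak = 0
            self.margin_db = self.initial_margin_db
        return ok

    def closed_loop_allowed(self) -> bool:
        return self.streak >= self.streak_to_close


t = TrustTracker(margin_db=2.0, min_margin_db=0.5, shrink_factor=0.5,
                 streak_to_shrink=2, streak_to_close=4)
for _ in range(4):
    t.record(predicted_db=10.0, measured_db=10.1)
print(t.margin_db, t.closed_loop_allowed())   # 0.5 True
t.record(predicted_db=10.0, measured_db=11.0)  # a 1 dB miss exceeds the 0.5 dB margin
print(t.margin_db, t.closed_loop_allowed())   # 2.0 False
```

Even when the tracker allows closed-loop operation, decisions flagged as critical would still be routed to a human in this scheme.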

Steps to repair connections instantaneously

Mastering uncertainties carefully can help reduce overprovisioning without exceeding the maximum network outage probability set by the operator. However, a larger number of reconfigurations could come with an increased component failure rate, which is not yet fully known. System designers have devised specific workarounds to handle this risk, and the digital twin also offers immense opportunities for quick troubleshooting and fast repairs. Combined with alarms, the new telemetry data will provide insight into the root causes of failures, which can then be proactively detected or corrected in ways that have never been possible before.
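One simple way to combine per-lightpath telemetry and alarms for root-cause analysis is to intersect the routes of the degraded lightpaths and exclude links that also carry healthy ones. This is a hypothetical sketch, not a product algorithm, and it assumes a single fault that degrades every lightpath crossing the faulty link; multiple simultaneous faults or partial degradations would need a richer model.

```python
from typing import Mapping, Sequence, Set


def suspect_links(routes: Mapping[str, Sequence[str]], degraded: Set[str]) -> set[str]:
    """Links shared by every degraded lightpath and by no healthy one (single-fault assumption)."""
    if not degraded:
        return set()
    common = set.intersection(*(set(routes[lp]) for lp in degraded))
    healthy_links = set().union(*(routes[lp] for lp in routes if lp not in degraded))
    return common - healthy_links


# Hypothetical routes: lightpath name -> ordered list of link identifiers.
routes = {
    "lp-A": ["L1", "L2", "L3"],
    "lp-B": ["L2", "L3", "L4"],
    "lp-C": ["L3", "L5"],
}
print(suspect_links(routes, degraded={"lp-A", "lp-B"}))  # {'L2'}
```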

More insight on the path forward

The path toward more responsive networks has opened, and a more united IT and network infrastructure is on the horizon. The next posts in our blog series will provide more insight into how operators can make optical networks smarter, or shall we say brighter, in addressing the capacity needs of a more responsive, insight-driven network.

Learn more

- Application note: Insight-driven optical networks: Automating networks with innovative business and network insights

- Application note: WaveSuite Network Insight: Harnessing the power of machine learning and automation to lower network TCO

- WaveSuite Network Insight Health & Analytics data sheet

- K. Christodoulopoulos, C. Delezoide, N. Sambo, A. Kretsis, I. Sartzetakis, A. Sgambelluri, N. Argyris, G. Kanakis, P. Giardina, G. Bernini, D. Roccato, A. Percelsi, R. Morro, H. Avramopoulos, P. Castoldi, P. Layec, and S. Bigo, “Towards Efficient, Reliable and Autonomous Optical Networks: the ORCHESTRA solution,” IEEE/OSA J. Opt. Commun. Netw., vol. 11, no. 9, pp. C10-C24, 2019.

- E. Seve, J. Pesic, C. Delezoide, S. Bigo, and Y. Pointurier, “Learning process for reducing uncertainties on network parameters and design margins,” IEEE/OSA J. Opt. Commun. Netw., vol. 10, no. 2, pp. A298-A306, 2018.

- P. Jennevé, P. Ramantanis, N. Dubreuil, F. Boitier, P. Layec, and S. Bigo, “Measurement of Optical Nonlinear Distortions and their Uncertainties in Coherent Systems,” IEEE/OSA J. Lightwave Technol., vol. 35, no. 24, Dec. 2017.