Don’t let the data center network stall your government services and agency missions

Governments have the responsibility to serve and provide for their citizens, defend the nation and invest in economic growth. Accomplishing these goals in the era of big data, 5G and IoT means that they need to fully harness the power of ICT to gather, process and analyze a gargantuan amount of information.

Whether delivering e-services to today’s internet-savvy citizens or supporting government employees working from home, governments need to upgrade and modernize their IT infrastructure to support the cloud. In particular, they need to make compute resources agile, scalable, portable, efficient and elastic while keeping costs in check. In 2019, responding to this modernization challenge, the US Federal Government refreshed its cloud computing strategy, moving beyond the original 2011 Cloud First initiative and transitioning to Cloud Smart, which provides implementation guidance to actualize the promise of the cloud.

At the center of cloud computing and big data are data centers. They are the engines of this revolution. You may be surprised to learn that they have actually been around as long as mainframe computers. Many consider the first data center to have been the computer room built in the 1940s for the US Army’s ENIAC (Electronic Numerical Integrator and Computer), a general-purpose computer with no transistors but 18,000 vacuum tubes. Today’s data center is a dedicated facility or space to house compute equipment (known as servers) and storage. It is where applications (or workloads in IT jargon) are run and data are processed, analyzed and stored.

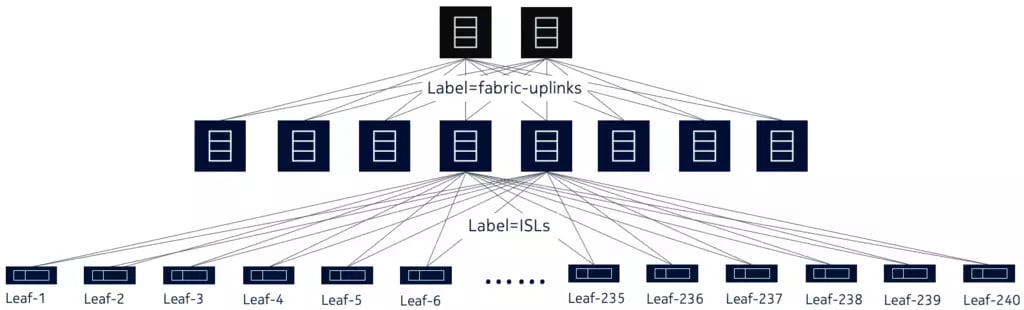

The network that connects servers and storage is foundational to the data center. It is commonly known as the data center fabric - or simply the ‘fabric’ - because it interlaces any-to-any connections among servers and storage in a mesh, similar to weaving a piece of cloth fabric. It enables applications residing on servers to communicate with each other (a.k.a. east-west traffic) as well as with clients or users outside the data center (north-south traffic).

While you are filing your tax return or enquiring about your pension online, the application is busy crunching data transported by the fabric from one server to another, as well as to your computer. An underperforming fabric can become a performance bottleneck, slowing down e-government services and impacting critical applications used during agency missions.

Figure 1. A typical mesh network for a data center

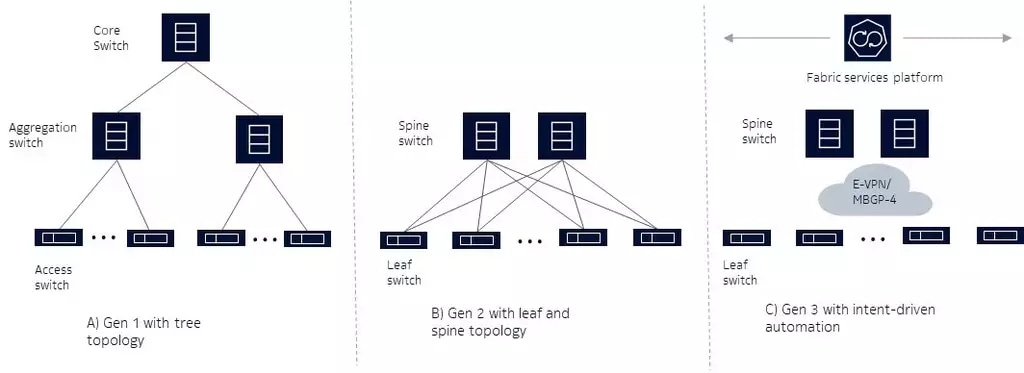

The data center network has not always been a fabric. When enterprises started to build data centers, the network looked more like a tree. Based on the enterprise LAN blueprint, it was a three-tier Ethernet network with access, aggregation and core layers. From today’s vantage point, we call it a generation 1 (Gen 1) network (see figure 2A). Built on VLANs and the spanning tree protocol (STP), it was designed mainly to transport north-south traffic. IP routing was rarely used within the data center, so the whole network was just one big broadcast domain.

With no telemetry support on the LAN switches (other than basic SNMP access to monitor port status and other rudimentary parameters), IT administrators had very limited visibility into network operating conditions. With no application programming interface (API), they had little ability to react programmatically to changes in data center traffic. Nevertheless, the network worked well enough because the compute environment was centralized and static: dedicated servers ran applications developed with a centralized architecture, each using a single IP address and Ethernet MAC address.

Figure 2. Three generations of data center network architectures

Pressure, however, soon mounted on Gen 1 networks. In the early 2000s, software developers started to write applications using a distributed architecture with different modules residing on different servers. With the advent of the World Wide Web, some servers were dedicated to handling HTTP requests (front-end processing), while other servers were dedicated to numeric calculation, database processing and other functions (back-end processing). IT became critical to business operations, so disaster recovery also became a top concern. In order to keep costs in check, IT embraced server virtualization technology. Server virtualization “breaks up” a physical server into multiple virtual ones, each with its own dedicated resources such as CPU, memory and IP/VLAN settings.

With the shift to virtualization, north-south traffic no longer dominated data center traffic patterns. East-west traffic grew immensely, putting tremendous strain on three-tier networks. Depending on server location, traffic might traverse just one hop through the attached access switch, or it might travel multiple hops: for example, up from an access switch to an aggregation switch and a core switch, and then back down. This variability introduced high latency and complicated network capacity planning. Moreover, server virtualization caused the number of compute endpoints to explode, pushing against the VLAN scalability limit of roughly 4,000 VLAN IDs (the 12-bit VLAN ID field allows 4,094 usable values).
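The uneven path lengths described above can be made concrete with a small model. The following is a minimal sketch, assuming a hypothetical toy topology (one core, two aggregation and four access switches, all names invented for illustration); it counts how many switches a frame crosses between two access switches.

```python
from collections import deque

# Hypothetical three-tier topology: each switch maps to its direct neighbours.
LINKS = {
    "core1": ["agg1", "agg2"],
    "agg1": ["core1", "acc1", "acc2"],
    "agg2": ["core1", "acc3", "acc4"],
    "acc1": ["agg1"],
    "acc2": ["agg1"],
    "acc3": ["agg2"],
    "acc4": ["agg2"],
}


def switches_on_path(src_access, dst_access):
    """Count the switches crossed on the shortest path (breadth-first search)."""
    # The source access switch itself counts as the first switch crossed.
    crossed = {src_access: 1}
    queue = deque([src_access])
    while queue:
        node = queue.popleft()
        if node == dst_access:
            return crossed[node]
        for neighbour in LINKS[node]:
            if neighbour not in crossed:
                crossed[neighbour] = crossed[node] + 1
                queue.append(neighbour)
    raise ValueError("no path between the two switches")


same_switch = switches_on_path("acc1", "acc1")   # both servers on one access switch
same_agg = switches_on_path("acc1", "acc2")      # up to aggregation and back down
across_core = switches_on_path("acc1", "acc3")   # up through the core and back down
print(same_switch, same_agg, across_core)
```

In this toy model the path length depends entirely on where the two servers sit, which is what makes latency and capacity hard to predict in a tree-shaped network.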

IT administrators overcame the Gen 1 shortcomings by evolving to a second-generation (Gen 2) network: a two-tier leaf-and-spine architecture built from purpose-built, high fan-out switches with a limited level of IP routing capability (see figure 2B). The data center network became a fabric, with every leaf switch connecting to every spine switch, bringing fully meshed connectivity to all virtual compute endpoints.
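To illustrate the "every leaf connects to every spine" rule, here is a minimal sketch that builds such a mesh and lists the paths between two leaves. The switch names and the sizes (four leaves, two spines) are assumptions chosen only for the example.

```python
from itertools import combinations


def build_leaf_spine(num_leaves, num_spines):
    """Return leaf names, spine names and the full set of (leaf, spine) links."""
    leaves = [f"leaf{i}" for i in range(1, num_leaves + 1)]
    spines = [f"spine{i}" for i in range(1, num_spines + 1)]
    # Full mesh between the two tiers: one link per (leaf, spine) pair.
    links = {(leaf, spine) for leaf in leaves for spine in spines}
    return leaves, spines, links


def spines_between(leaf_a, leaf_b, spines, links):
    """List the spines linked to both leaves; each one is a leaf-spine-leaf path."""
    return [s for s in spines if (leaf_a, s) in links and (leaf_b, s) in links]


leaves, spines, links = build_leaf_spine(num_leaves=4, num_spines=2)
print(len(links))  # number of leaf-to-spine links: leaves x spines

# Every pair of leaves is connected through every spine, so all paths
# between different leaves have the same length (leaf, spine, leaf).
for a, b in combinations(leaves, 2):
    print(a, b, spines_between(a, b, spines, links))
```

Unlike the tree, the path length between any two leaves is the same, and adding a spine adds one more equal-length path for every pair of leaves.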

To overcome the VLAN scalability limitation, virtual extensible LAN (VXLAN) tunnels supporting up to 16 million VXLAN network IDs were introduced. To eliminate bandwidth bottlenecks, multi-chassis link aggregation groups (LAGs) can also be used between leaf and spine switches.
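The difference in scale comes down to field width: the IEEE 802.1Q VLAN ID is 12 bits wide, while the VXLAN network identifier (VNI) defined in RFC 7348 is 24 bits wide. A short worked calculation, with variable names chosen only for illustration:

```python
VLAN_ID_BITS = 12     # width of the IEEE 802.1Q VLAN ID field
VXLAN_VNI_BITS = 24   # width of the VXLAN network identifier (RFC 7348)

vlan_values = 2 ** VLAN_ID_BITS     # all possible VLAN ID values
usable_vlans = vlan_values - 2      # VLAN IDs 0 and 4095 are reserved
vxlan_vnis = 2 ** VXLAN_VNI_BITS    # all possible VNI values

print(usable_vlans)                 # 4094
print(vxlan_vnis)                   # 16777216
print(vxlan_vnis // vlan_values)    # 4096 times more identifiers
```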

Gen 2 leaf and spine switches improve on Gen 1 switches by incorporating some telemetry capabilities and APIs. These allow IT administrators increased network visibility and programmatic control. However, they lack the total openness that allows IT to harness their DevOps environment to plan, configure, validate and fine-tune the fabric during day-to-day operations. Despite these shortcomings, since Gen 2 networks are optimized for both east-west and north-south traffic, many IT administrators have deployed them as they adopt server virtualization.

Near the end of the 2000s, another IT innovation appeared — cloud computing. Built on server virtualization and cloud management system orchestration, cloud computing treats all compute resources as a pool of dynamically consumable resources. As demand increases, it responds by scaling out applications (known as workloads in cloud terminology), provisioning new virtual compute resources using a fully automated process as part of IT DevOps.

There is a clear need for US government agencies to evolve their data centers and leverage cloud computing. For example, NASA pioneered the development and use of cloud computing resources to rapidly scale their ability to process space mission imagery and other types of data. Many US government agencies are actively investing in how to smartly and securely build and leverage cloud infrastructure.

This new cloud paradigm requires an equally agile fabric. While it takes only minutes to instantiate new compute resources, it takes hours or days to configure a Gen 2 fabric to connect them. The strain on a Gen 2 fabric is exacerbated by microservices-based applications that make east-west traffic more dynamic than ever, and compounded further by ever-increasing mission-driven data and the advent of IoT and 5G.

These trends are generating a gargantuan amount of data that needs to be processed quickly. If the fabric cannot be reconfigured dynamically to keep pace with data volumes and workload changes, the applications will underperform. This can cause problems ranging from frustrating government and citizen users to jeopardizing the success of missions where human lives are in the balance.

To tackle the challenges, a third-generation (Gen 3) network architecture is emerging in this era of automation (see figure 2C). Grounded in VXLAN, full-fledged IP routing and the leaf-and-spine network topology, the Gen 3 architecture harnesses the robustness of the BGP routing protocol and the versatility of EVPN.

Learning from Gen 2 limitations, Gen 3 embraces a totally open approach, which seamlessly integrates the fabric operation with the DevOps environment. This allows for the development of network automation capabilities and other native network applications (NetOps). For example, instead of working out the fabric design and configuration using primitive spreadsheets and templates, IT can adopt a high-level intent-based approach that takes into account information such as the number of racks and the number of servers per rack. IT can also use application workload intent such as microservice endpoint locations, quality of service and the security policy required.

All these intents can be expressed in a configuration language popular with DevOps tools, such as YAML, and fed to a fabric automation and operations platform that automates fabric design and operation. When workload intents change, the fabric can be fine-tuned and pre-validated using the digital twin paradigm. Through this intent-based approach, network automation can be achieved. Additionally, Gen 3 switches use open, standard Linux-based network operating systems (NOS) with full telemetry visibility. Taken together, these advances in the Gen 3 data center network fabric allow IT administrators to manage the network just like they manage their applications and compute resources.
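As a rough illustration of what "intent in, design out" might look like, the sketch below derives a simple leaf-and-spine design from rack-level intent. It is not any vendor's schema or algorithm: the intent keys, the one-leaf-per-rack rule, the two-spine minimum and the oversubscription target are all assumptions made for the example. The intent is written as a Python dictionary, which is what a YAML file with the same keys would typically load into.

```python
import math

# Hypothetical intent (all keys are illustrative, not a real platform schema).
intent = {
    "racks": 8,
    "servers_per_rack": 24,
    "server_port_gbps": 25,
    "uplink_gbps": 100,
    "max_oversubscription": 3.0,  # downlink : uplink bandwidth per leaf
}


def design_fabric(intent):
    """Derive leaf and spine counts from rack-level intent.

    Assumptions: one leaf per rack, one uplink from each leaf to each spine,
    and at least two spines for redundancy.
    """
    downlink_gbps = intent["servers_per_rack"] * intent["server_port_gbps"]
    # Smallest number of uplinks per leaf that meets the oversubscription target.
    needed = math.ceil(
        downlink_gbps / (intent["max_oversubscription"] * intent["uplink_gbps"])
    )
    spines = max(2, needed)
    return {
        "leaves": intent["racks"],
        "spines": spines,
        "leaf_spine_links": intent["racks"] * spines,
        "oversubscription": downlink_gbps / (spines * intent["uplink_gbps"]),
    }


design = design_fabric(intent)
print(design)
```

Changing a single value in the intent, such as the number of racks, regenerates the whole design, which is the practical advantage over maintaining spreadsheets and templates by hand.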

Government today operates in a data-centric world. Fully harnessing the power of IT is pivotal to delivering efficient services, improving effectiveness and keeping the nation safe and competitive. The data center is the central piece of IT infrastructure and the data center fabric is its cornerstone. Many US Government data centers need to move to a Gen 3 architecture to keep pace with rapidly evolving data crunching and analytics requirements.

To learn more about how Nokia’s Data center fabric solution can help you keep one step ahead of ever-changing data and traffic requirements, register for our upcoming webinar: Present and future data center network technologies to meet the mission-critical needs of defense.

Also visit our US Federal web page to understand what other solutions can do for you.