Data center networks

Turn your data center into the nerve center of the AI era

Why choose Nokia for data center networks?

The data center landscape is evolving rapidly to meet the demands of the AI era.

We help the world's most innovative companies transform their data centers, unlocking new revenue streams and boosting operational efficiency with our advanced data center networking solutions.

Nokia has an established track record of building business- and mission-critical networks. Our quality-first philosophy is a big part of why customers trust us to build data center networks that “just work”.

Brochure

Data center networks for the AI era

What are Nokia data center networking solutions?

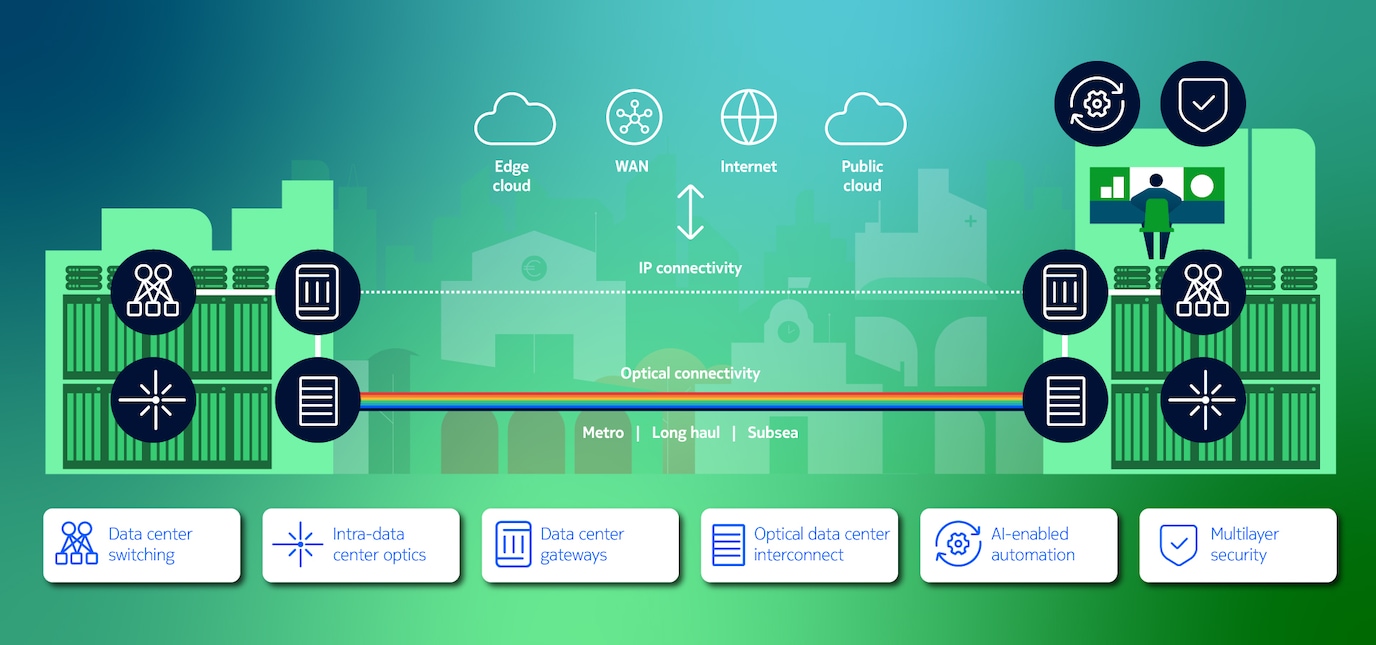

Our data center networking solutions include high-performance switches and optics that ramp up connectivity inside data centers, and IP and optical technologies that carry traffic to, from, and between data centers. Our comprehensive portfolio delivers unmatched performance, scalability and efficiency for the most demanding environments.

Our data center switching solutions, built for “human error zero”, support interface speeds up to 800GE, a choice of network operating systems (NOS), and a modern automation platform to deliver reliable, simple, and adaptable networking for traditional and AI data centers.

Product

7215 IXS management switches

Build reliable management network solutions for data centers.

Product

7220 IXR low-latency switches

Build open and automated data center fabrics with high-capacity, fixed-configuration platforms.

Product

7250 IXR deep-buffer switches

Deploy high-density, high-capacity data center switches for an open, leaf-spine architecture.

Product

SR Linux NOS

Evolve your IP and data center networks with an open, extensible and resilient NOS.

Our vertically integrated, versatile intra-data center connectivity solutions enable operators to scale compute capacity while minimizing power consumption.

Solution

ICE-D intra-data center optics

Ultra-low-power, scalable optical connectivity optimized for intra-data center links in the AI era.

Our data center gateway enables high-performance connectivity between data centers, public clouds, the wide area network (WAN) and the internet over any transport technology.

Solution

Data Center Gateway

Deliver reliable, scalable and secure data center interconnect and peering.

Product

7750 Service Router

High-performance IP edge and core routers.

Product

Service Router Operating System (SR OS)

Rely on exceptional IP network performance with our proven router software.

Our flexible, application-optimized optical DCI solutions deliver the scale, security, and resiliency essential to support a wide range of data center connectivity needs.

Solution

Optical Data Center Interconnect

Maximizing network infrastructure ROI in the era of AI.

Product

1830 Photonic Service Interconnect – Modular (PSI-M)

A compact modular platform that delivers scalability and flexibility to optical networking and DCI applications.

Product

1830 Photonic Service Switch (PSS)

Engineered to handle the unique traffic conditions of scale-out AI/ML training and inferencing workloads.

Industry recognition

Nokia Validated Designs (NVD)

Validated for production, built for automation, open for collaboration.

Customer success stories

Learn more about data center networks

Critical connectivity for modern data centers

Learn more about the critical role the network plays as data centers evolve to enable cloud transformation in the AI era.

Data center networks news

Keep up with the latest news around data center networks.

Data center networks blogs

Explore our latest blogs on high-performance data center networking solutions.


Disclaimers:

GARTNER is a registered trademark and service mark of Gartner and Magic Quadrant is a registered trademark of Gartner, Inc. and/or its affiliates in the U.S. and internationally and are used herein with permission. All rights reserved.

Gartner does not endorse any vendor, product or service depicted in its research publications, and does not advise technology users to select only those vendors with the highest ratings or other designation. Gartner research publications consist of the opinions of Gartner’s research organization and should not be construed as statements of fact. Gartner disclaims all warranties, expressed or implied, with respect to this research, including any warranties of merchantability or fitness for a particular purpose.
