Our blog

Discover networking innovations transforming the world

Featured blogs

The illusion of internet resilience

2025 cloud outages and massive DDoS attacks reveal the internet's fragility and the need to rethink network security.

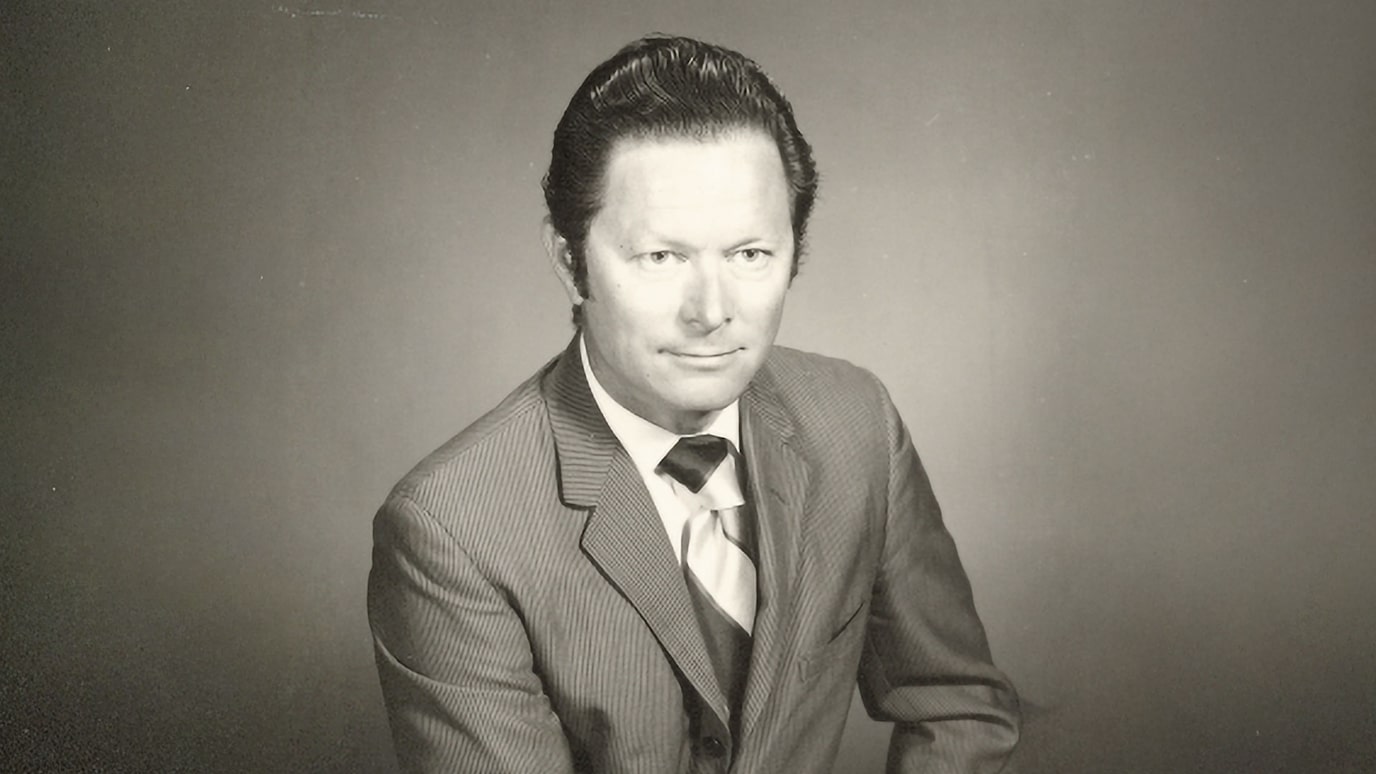

Nokia deepens commitment to open innovation as AI initiatives scale in the data center and to the edge

Nokia is proud to be a part of the OCP ecosystem, guiding the evolution of the AI supercycle with standards for open innovation.

Latest blog posts

Networks

19 Dec 2025

18 Dec 2025

17 Dec 2025

17 Dec 2025

16 Dec 2025

15 Dec 2025

12 Dec 2025

12 Dec 2025

Innovation

Nokia Bell Labs

Enterprises

Partners and licensing

Sustainability

Company
